Install on Amazon Web Services

Prerequisites

The instructions assume you have terraform installed on your machine, or use automation such as Atlantis to run `terraform apply` for you.

Minimum supported versions for Workflows terraform code:

- Terraform >= 1.4

- AWS Provider >= 4.58.0
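One way to enforce these minimums is a `terraform` settings block in the root module that calls Workflows. This is a minimal sketch; put it wherever your configuration already declares its providers.

```hcl
terraform {
  # Refuse to run with a Terraform CLI older than the Workflows minimum
  required_version = ">= 1.4"

  required_providers {
    aws = {
      source = "hashicorp/aws"
      # Minimum AWS provider version supported by the Workflows module
      version = ">= 4.58.0"
    }
  }
}
```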

(Optional) Grant permissions to deploy

If Aspect CSE is expected to deploy resources, then follow this section.

Create a role to hold our policies. This may be done in Terraform, or in the AWS console:

Navigate to IAM > Roles > Create role

- Select "trusted entity"

  - Trusted entity type: AWS account

  - Another AWS account: 302232432727 (this is Aspect's AWS account number)

  - Require MFA: enable, as Aspect's engineers are required to use multi-factor auth

- Name, review, and create

  - One choice for the name is aspect-workflows-comaintainers. Whatever name you choose, Aspect engineers must know it to be able to assume the role.
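If you prefer Terraform to the console steps, the sketch below creates an equivalent role. It assumes the console's "Another AWS account" option trusts the account's root principal and that "Require MFA" adds an `aws:MultiFactorAuthPresent` condition, which is what the console generates; the data source and resource labels are hypothetical.

```hcl
# Trust policy: principals in Aspect's AWS account may assume this role,
# but only when they signed in with MFA.
data "aws_iam_policy_document" "aspect_comaintainers_trust" {
  statement {
    effect  = "Allow"
    actions = ["sts:AssumeRole"]

    principals {
      type        = "AWS"
      identifiers = ["arn:aws:iam::302232432727:root"] # Aspect's AWS account
    }

    condition {
      test     = "Bool"
      variable = "aws:MultiFactorAuthPresent"
      values   = ["true"]
    }
  }
}

resource "aws_iam_role" "aspect_comaintainers" {
  # Suggested name; share the name you choose with Aspect
  name               = "aspect-workflows-comaintainers"
  assume_role_policy = data.aws_iam_policy_document.aspect_comaintainers_trust.json
}
```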

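Permissions required for terraform apply

Attach a policy to the role that allows the following actions, which `terraform apply` needs to create and manage the Workflows resources: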

autoscaling:CreateAutoScalingGroup

autoscaling:DeleteAutoScalingGroup

autoscaling:DescribeAutoScalingGroups

autoscaling:DescribeScalingActivities

autoscaling:SetInstanceProtection

autoscaling:UpdateAutoScalingGroup

cloudformation:CreateStack

cloudformation:DeleteStack

cloudformation:DescribeStacks

cloudformation:GetTemplate

cloudwatch:DeleteAlarms

cloudwatch:DescribeAlarms

cloudwatch:ListTagsForResource

cloudwatch:PutMetricAlarm

ec2:AuthorizeSecurityGroupEgress

ec2:AuthorizeSecurityGroupIngress

ec2:CreateLaunchTemplate

ec2:CreateSecurityGroup

ec2:DeleteLaunchTemplate

ec2:DeleteSecurityGroup

ec2:DescribeImages

ec2:DescribeLaunchTemplates

ec2:DescribeLaunchTemplateVersions

ec2:DescribeNetworkAcls

ec2:DescribeNetworkInterfaces

ec2:DescribeRouteTables

ec2:DescribeSecurityGroups

ec2:DescribeSubnets

ec2:DescribeVpcAttribute

ec2:DescribeVpcClassicLink

ec2:DescribeVpcClassicLinkDnsSupport

ec2:DescribeVpcs

ec2:RevokeSecurityGroupEgress

elasticloadbalancing:CreateListener

elasticloadbalancing:CreateLoadBalancer

elasticloadbalancing:CreateTargetGroup

elasticloadbalancing:DeleteListener

elasticloadbalancing:DeleteLoadBalancer

elasticloadbalancing:DeleteTargetGroup

elasticloadbalancing:DescribeListeners

elasticloadbalancing:DescribeLoadBalancerAttributes

elasticloadbalancing:DescribeLoadBalancers

elasticloadbalancing:DescribeTags

elasticloadbalancing:DescribeTargetGroupAttributes

elasticloadbalancing:DescribeTargetGroups

elasticloadbalancing:DescribeTargetHealth

elasticloadbalancing:ModifyLoadBalancerAttributes

elasticloadbalancing:ModifyTargetGroupAttributes

events:DeleteRule

events:DescribeRule

events:ListTagsForResource

events:ListTargetsByRule

events:PutRule

events:PutTargets

events:RemoveTargets

iam:AddRoleToInstanceProfile

iam:AttachRolePolicy

iam:CreateInstanceProfile

iam:CreatePolicy

iam:CreateRole

iam:DeleteInstanceProfile

iam:DeletePolicy

iam:DeleteRole

iam:DetachRolePolicy

iam:GetInstanceProfile

iam:GetPolicy

iam:GetPolicyVersion

iam:GetRole

iam:ListAttachedRolePolicies

iam:ListInstanceProfilesForRole

iam:ListPolicyVersions

iam:ListRolePolicies

iam:RemoveRoleFromInstanceProfile

lambda:AddPermission

lambda:CreateFunction

lambda:DeleteFunction

lambda:GetFunction

lambda:GetFunctionCodeSigningConfig

lambda:GetPolicy

lambda:ListVersionsByFunction

lambda:RemovePermission

logs:CreateLogGroup

logs:DeleteLogGroup

logs:DescribeLogGroups

logs:ListTagsLogGroup

logs:PutRetentionPolicy

memorydb:CreateCluster

memorydb:CreateSubnetGroup

memorydb:DeleteCluster

memorydb:DeleteSubnetGroup

memorydb:DescribeClusters

memorydb:DescribeSubnetGroups

memorydb:ListTags

s3:CreateBucket

s3:DeleteBucket

s3:DeleteBucketPolicy

s3:DeleteObject

s3:GetAccelerateConfiguration

s3:GetBucketAcl

s3:GetBucketCors

s3:GetBucketLogging

s3:GetBucketPolicy

s3:GetBucketPublicAccessBlock

s3:GetBucketRequestPayment

s3:GetBucketTagging

s3:GetBucketVersioning

s3:GetBucketWebsite

s3:GetEncryptionConfiguration

s3:GetLifecycleConfiguration

s3:GetObject

s3:GetObjectAttributes

s3:GetObjectTagging

s3:GetObjectVersion

s3:GetObjectVersionAttributes

s3:GetReplicationConfiguration

s3:ListAllMyBuckets

s3:ListBucket

s3:ListObjects

s3:PutBucketLogging

s3:PutBucketPolicy

s3:PutBucketPublicAccessBlock

s3:PutEncryptionConfiguration

s3:PutLifecycleConfiguration

s3:PutObject

ssm:DeleteParameter

ssm:DescribeParameters

ssm:GetParameter

ssm:ListTagsForResource

ssm:PutParameter

sts:AssumeRole

sts:GetCallerIdentity
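A minimal sketch of granting these actions in Terraform, as a managed policy attached to the role from the previous step. The action list is abbreviated and must be filled in with every action above; the policy name is hypothetical, and `resources = ["*"]` is an assumption you may want to narrow. If the role is managed in the same configuration, reference its `name` attribute instead of the literal string so Terraform creates the role before attaching the policy.

```hcl
data "aws_iam_policy_document" "workflows_deploy" {
  statement {
    effect = "Allow"
    # Abbreviated: paste every action from the list above here
    actions = [
      "autoscaling:CreateAutoScalingGroup",
      "autoscaling:DeleteAutoScalingGroup",
      # ... remaining actions from the list above ...
      "sts:AssumeRole",
      "sts:GetCallerIdentity",
    ]
    # Assumption: not scoped to specific resource ARNs
    resources = ["*"]
  }
}

resource "aws_iam_policy" "workflows_deploy" {
  name   = "aspect-workflows-deploy" # hypothetical name
  policy = data.aws_iam_policy_document.workflows_deploy.json
}

resource "aws_iam_role_policy_attachment" "workflows_deploy" {
  # Assumes the suggested role name was used
  role       = "aspect-workflows-comaintainers"
  policy_arn = aws_iam_policy.workflows_deploy.arn
}
```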

Choose an Amazon Machine Image (AMI)

Aspect Workflows CI runners are EC2 instances, not Kubernetes pods, so the base image is an AMI rather than a container image. To help you get started with Workflows, Aspect provides a number of starter AMIs.

Aspect Workflows starter AMIs are currently built on the following Linux distributions:

- Amazon Linux 2 (al2)

- Amazon Linux 2023 (al2023)

- Debian 11 "bullseye" (debian-11)

- Debian 12 "bookworm" (debian-12)

- Ubuntu 20.04 "Focal Fossa" (ubuntu-2004)

They are built for both amd64 (x86_64) and arm64 (aarch64), and come in the following variants:

- minimal (just the bare minimum Workflows deps for fully hermetic builds)

- gcc (minimal + gcc)

- docker (minimal + docker)

- kitchen-sink (minimal + gcc, docker and other misc tools)

These are currently available in us-east-1, us-east-2, us-west-1 and us-west-2. Please contact us if you would like us to publish starter AMIs to additional regions.

To query the list of all Aspect Workflows starter images, run the following command:

```shell
aws ec2 describe-images --owners 213396452403 --filters "Name=name,Values=aspect-workflows-*" --query 'sort_by(Images, &Name)[].Name'
```

This bit of terraform locates an AMI at plan/apply time. In the name pattern, replace each `<...|...>` group with one of the listed options:

```hcl
data "aws_ami" "aspect_runner_ami" {
  most_recent = true
  owners      = ["213396452403"] # Public Aspect Workflows images AWS account (workflows-images)

  filter {
    name   = "name"
    values = ["aspect-workflows-<al2|al2023|debian-11|debian-12|ubuntu-2004>-<minimal|gcc|docker|kitchen-sink>-<amd64|arm64>-*"]
  }
}
```
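For instance, with the placeholders filled in for the Amazon Linux 2023 minimal image on x86_64, the lookup might read as follows. This assumes the published image names follow the pattern above exactly; confirm with the `describe-images` command before relying on it. Keep the architecture consistent with your instance types: amd64 images need x86_64 types such as the i4i family, while arm64 images need Graviton types such as the m7g family.

```hcl
# Latest Amazon Linux 2023 "minimal" starter image for amd64 (x86_64)
data "aws_ami" "aspect_runner_ami" {
  most_recent = true
  owners      = ["213396452403"] # Public Aspect Workflows images AWS account

  filter {
    name   = "name"
    values = ["aspect-workflows-al2023-minimal-amd64-*"]
  }
}
```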

The packer scripts we use to build Aspect Workflows starter images are open source and can be found at https://github.com/aspect-build/workflows-images.

In a later setup stage, we'll discuss how to build a custom AMI if required to green up your build.

Add the terraform module

Our terraform module is currently delivered as a zip archive in an S3 bucket. Add it to your existing Terraform setup as a module.

Here's an example:

```hcl
module "aspect_workflows" {
  # Replace 5.x.x with an actual version:
  source = "https://aspect-artifacts.s3.us-east-2.amazonaws.com/5.x.x/workflows/terraform-aws-aspect-workflows.zip"

  # Replace my-region with the AWS region you're deploying to
  # (S3 buckets are global, but terraform uses an API that can't do cross-region reads)
  aspect_artifacts_bucket = "aspect-artifacts-[my-region]"

  # Ask us to generate a customer ID for your organization and input it here
  customer_id = "MyCorp"

  # VPC and private subnet(s) the runners launch into, here read from another stack's state
  vpc_id      = data.terraform_state.outputs.circleci_vpc.vpc_id
  vpc_subnets = [data.terraform_state.outputs.circleci_vpc.private_subnets[0]]

  # Replace XXX with one of gha (GitHub Actions), cci (CircleCI), bk (Buildkite)
  hosts = ["XXX"]

  # Define Bazel states we know how to warm up
  warming_sets = {
    default = {}
  }

  # Define any customizations for the remote cache here.
  remote_cache = {}

  resource_types = {
    "default" = {
      # One or more instance types can be specified via 'instance_types'.
      # Specifying multiple instance types allows Workflows to scale out when demand
      # for a particular instance type is high or cannot be fulfilled.
      instance_types = ["i4i.xlarge", "i4i.2xlarge"]
      image_id       = data.aws_ami.aspect_runner_ami.id
    }
  }

  # Replace XXX with one of gha, cci, bk
  XXX_runner_groups = {
    default = {
      max_runners   = 10
      resource_type = "default" # Corresponds to a resource_types entry above
      warming       = true
      warming_set   = "default" # Corresponds to a warming_sets entry above
      ...
    }

    warming = {
      max_runners   = 1
      resource_type = "default" # Corresponds to a resource_types entry above
      policies = {
        # "default" key in warming_management_policies corresponds to a warming_sets entry above
        warming_manage : module.aspect_workflows.warming_management_policies["default"].arn
      }
      warming_set = "default" # Corresponds to a warming_sets entry above
      ...
    }
  }
}
```
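If your VPC is not exported from another stack's state, you could look it up with the AWS provider's data sources instead. A minimal sketch, assuming the VPC and its private subnets carry identifying tags; the labels `ci` and `ci_private` and the tag values are hypothetical placeholders.

```hcl
# Find the CI VPC by its Name tag (placeholder value)
data "aws_vpc" "ci" {
  tags = {
    Name = "ci-vpc" # hypothetical tag value
  }
}

# Private subnets inside that VPC, selected by a hypothetical "Tier" tag
data "aws_subnets" "ci_private" {
  filter {
    name   = "vpc-id"
    values = [data.aws_vpc.ci.id]
  }

  tags = {
    Tier = "private" # hypothetical tag value
  }
}
```

You would then set `vpc_id = data.aws_vpc.ci.id` in the module block, and `vpc_subnets = [data.aws_subnets.ci_private.ids[0]]` to pass a single subnet as the original example does.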

(Optional) Apply custom security groups to runners

Some organizations have a policy that requires adding custom security groups to the runners that are managed by Aspect Workflows.

This can be achieved by setting the `security_groups` attribute on the runners or queue configuration object.

The attribute is a map from an arbitrary name to an AWS security group ID (Workflows does not currently use the name).

```hcl
runners = {
  default = {
    ...
    security_groups = {
      vpn_access : aws_security_group.vpc_access.id
    }
    ...
  }
}
```
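The example references `aws_security_group.vpc_access`. A minimal sketch of such a group, assuming it should allow SSH from a VPN address range; the group name, the CIDR, and the `runner_vpc_id` variable are hypothetical placeholders.

```hcl
# Hypothetical: ID of the VPC the Workflows runners launch into
variable "runner_vpc_id" {
  type = string
}

resource "aws_security_group" "vpc_access" {
  name        = "aspect-workflows-vpn-access" # hypothetical name
  description = "Extra security group attached to Aspect Workflows runners"
  vpc_id      = var.runner_vpc_id

  # Allow SSH from an internal VPN range (placeholder CIDR)
  ingress {
    description = "SSH from VPN"
    from_port   = 22
    to_port     = 22
    protocol    = "tcp"
    cidr_blocks = ["10.100.0.0/16"]
  }

  # No egress block: Terraform removes AWS's default allow-all egress rule from
  # groups it creates, so add one if this group must allow outbound traffic itself.
}
```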

Allowing Aspect read-only support access

The Workflows module takes an optional `support.support_role_name` configuration option. Workflows attaches policies to that role which provide read-only access to key logs, metrics and configuration values.

The policies are only created and attached if the role is given; Workflows does not create a role automatically to add these policies to.

Specifically, the attached policy allows:

- Read / List on all `/aw` SSM parameter store keys

- Describe on all ASGs, their associated instances, and the scaling activity

- Get on log streams and log events with the `aw_` prefix

- SSM access to running instances and port forwarding for Grafana

For example:

```hcl
resource "aws_iam_role" "support" {
  name = "AspectWorkflowsSupport"
  ...
}

module "workflows" {
  ...
  support = {
    support_role_name = aws_iam_role.support.name
  }
  ...
}
```

Allowing Aspect privileged support access

The Workflows module takes an optional `support.operator_role_name` configuration option. Workflows attaches policies to that role which provide privileged access to key resources.

This role is a superset of the read-only support access role above.

The policies are only created and attached if the role is given; Workflows does not create a role automatically to add these policies to.

Specifically, the attached policy allows:

- Manage Aspect build runner EC2 hosts, specifically by rebooting, stopping, and terminating.

- Delete S3 objects, only in specific Aspect-managed buckets.

- Manage the Redis cache, including updating/deleting the cluster, and creating snapshots.

For example:

```hcl
resource "aws_iam_role" "operator" {
  name = "AspectWorkflowsOperator"
  ...
}

module "aspect_workflows" {
  ...
  support = {
    operator_role_name = aws_iam_role.operator.name
  }
  ...
}
```

Workflows can also enable SSM access to key resources; this access is available only through the operator role.

To enable SSM access, set the following property in the support configuration. By default, SSM access is turned off.

```hcl
module "aspect_workflows" {
  ...
  support = {
    enable_ssm_access = true
  }
  ...
}
```
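The support options above are shown one at a time. A sketch of setting all three in a single `support` object follows, assuming the module accepts them together and that the `support` and `operator` roles are defined as in the earlier examples; other module inputs are omitted.

```hcl
module "aspect_workflows" {
  # ... other Workflows inputs (source, customer_id, vpc_id, ...) ...

  support = {
    # Read-only access to key logs, metrics and configuration values
    support_role_name = aws_iam_role.support.name

    # Privileged access; a superset of the read-only role
    operator_role_name = aws_iam_role.operator.name

    # SSM access to key resources, granted through the operator role only (off by default)
    enable_ssm_access = true
  }
}
```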

Apply

Run `terraform apply`, or whatever Infrastructure-as-Code automation you already use, such as Atlantis.

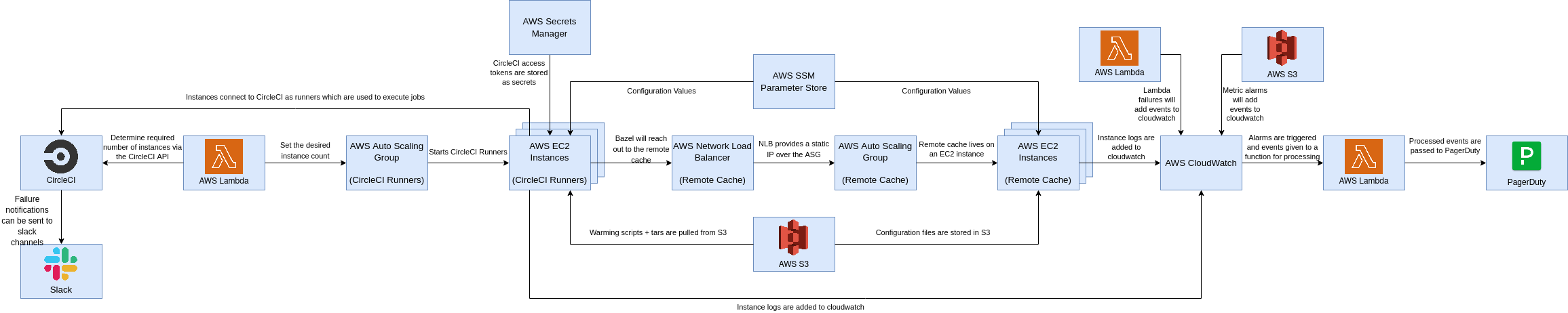

You'll get a resulting infrastructure like the following:

Next steps

Continue by choosing which CI platform you plan to interact with, and follow the corresponding installation steps.